The Quality Transfer: Delegate 15 Hours Without Losing Standards for $55K–$75K Operators

For $50K–$70K/month founders and operators, the Clear Edge OS Quality Transfer installs documented standards, verification systems, and feedback loops so quality lives in systems instead of your head.

The Executive Summary

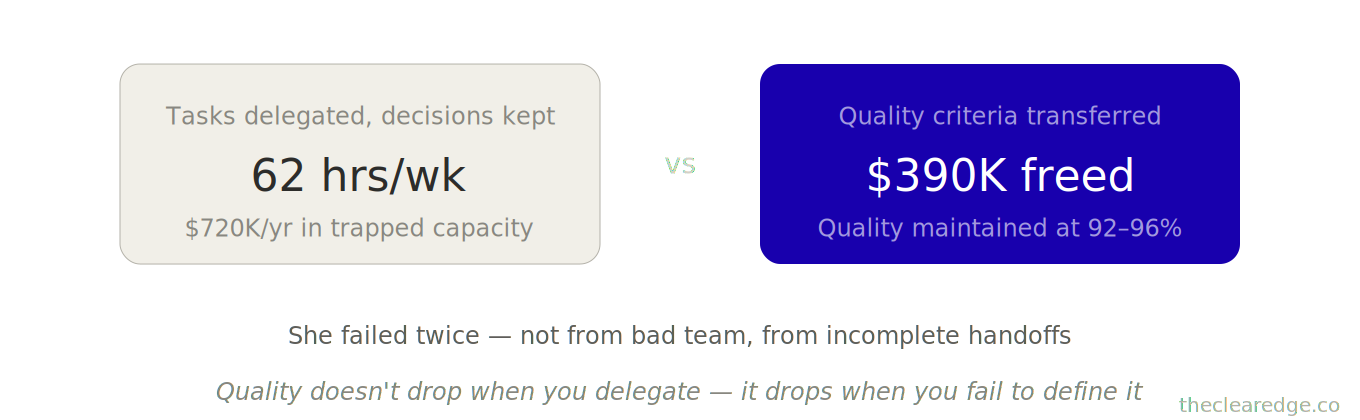

$50K–$70K/month founders quietly lose $390K–$720K a year in capacity after one bad handoff; the Clear Edge OS Quality Transfer lets you safely delegate 15 hours weekly without dropping standards.

Who this is for: $50K–$70K/month founders and operators working 60+ hours weekly who tried delegating once or twice, watched quality slip, and pulled 30 delegable hours back onto their own plates.

The quality problem: Failed handoffs put $13,700 MRR at risk, trap you in 62-hour weeks, and keep 30 delegable hours weekly on your own plate, an annual capacity loss of $390K–$720K at a $500/hour founder rate.

What you’ll learn: The Clear Edge OS Quality Transfer Framework—Document Excellence, Build Verification Systems, and Create Feedback Loops—so your 18+ standards, checks, and reviews live in systems instead of your head.

What changes if you apply it: Revisions drop from 3 rounds → 1, design review shrinks from 20–25 → 6–8 hours/month, video checks from 2 hours → 12 minutes, and you safely delegate 15–30 hours weekly while holding 92–96% client satisfaction.

Time to implement: Invest 11–17 hours upfront and 3–4 hours monthly to maintain, typically unlocking 12–65 hours/month (worth $6K–$32.5K) and preventing $390K–$1.17M in capacity loss over one to three years.

Written by Nour Boustani for $50K–$70K/month founders and operators who want to delegate 15+ hours weekly without slipping standards, losing clients, or hiding perfectionism behind “quality control.”

At $50K–$70K/month, one bad handoff already showed you the cost—upgrade to premium and install the Clear Edge OS Quality Transfer as a full system, not a concept.


Why Quality Drops When You Delegate At $50K–$70K/Month

You see the pattern long before you see the spreadsheet—delegation “fails,” standards slip, and the work snaps back to your desk.

Last month, I worked with a consultant at $64,000/month:

Serving 14 clients averaging $4,570/month each.

Working 62 hours weekly.

Client satisfaction was 94%.

She’d tried delegating before. Failed twice.

First attempt:

She hired a junior consultant to handle client deliverables.

Within 30 days, 3 clients complained about the quality of the junior consultant’s work.

She took the client deliverables back onto her own plate to protect quality.

$13,700 in monthly recurring revenue (3 clients at roughly $4,570 each) was at risk from quality issues introduced during the handoff.

Second attempt:

She hired a project manager to handle client communication.

The manager’s response times were fine, but the tone was wrong: too casual with executives and too formal with startups.

Two clients mentioned the communication issues in their feedback calls.

She took client communication back onto her own plate to protect quality.

“I’ve proven I can’t delegate,” she said. “Quality always drops.”

Wrong diagnosis.

I reviewed both delegation attempts and saw the same pattern in each one. She handed off tasks without transferring her quality criteria, so her team guessed at what “good enough” meant—and their guesses were wrong.

First delegation failure breakdown:

She gave the junior consultant the instruction: “Handle the monthly strategy reports.”

She didn’t give the junior consultant her full quality package, including:

12-point quality checklist

3 client communication preferences

Escalation thresholds

Revision protocols

The junior consultant delivered reports that met baseline requirements but missed the client-specific context she always included.

Clients noticed the change and said the reports felt generic and less personalized than previous months.

The root cause was not incompetence by the junior consultant but an incomplete handoff of her quality standards.

Second failure breakdown:

She gave the project manager the instruction: “Manage client communication.”

She didn’t provide tone guidelines by client type, response-time expectations by urgency, or what counts as “urgent” vs “routine.”

The project manager delivered fast responses but with inconsistent tone.

Clients noticed the change and said communication felt off-brand.

The root cause wasn’t bad judgment from the project manager but missing standards.

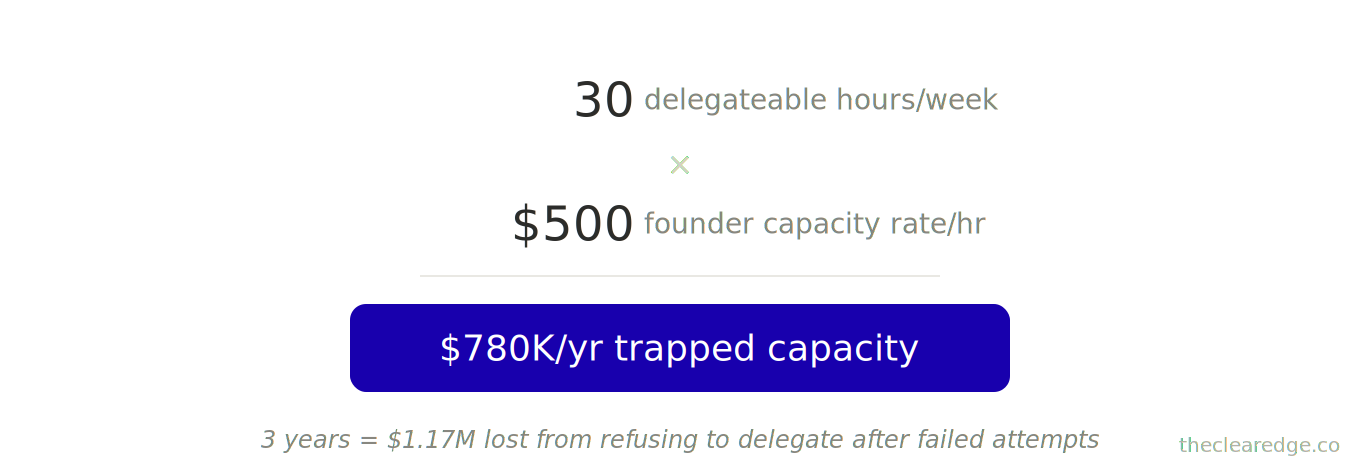

The math on her avoidance: 62 hours weekly, with 30 hours spent on work that could be delegated if quality standards were documented.

At 4 weeks a month, those 30 hours add up to 120 hours monthly, or $60,000 in opportunity cost at her $500/hour capacity rate.

Over 12 months, that was $720,000 in lost capacity from refusing to delegate after failed attempts.
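You can rerun this math with your own numbers using the minimal Python sketch below. The function name is hypothetical. The 4-week month is an assumption, chosen because it reproduces the 120-hour figure above; the capacity projection later in the article uses 4.33 weeks instead.

```python
def monthly_capacity_cost(delegable_hours_per_week: float,
                          hourly_rate: float,
                          weeks_per_month: float = 4.0) -> tuple[float, float]:
    """Return (hours per month, dollars per month) tied up in delegable work.

    weeks_per_month defaults to 4.0, an assumption that matches the
    120-hour figure in the example; pass 4.33 for a calendar-average month.
    """
    hours = delegable_hours_per_week * weeks_per_month
    return hours, hours * hourly_rate


# The consultant's numbers: 30 delegable hours weekly at a $500/hour capacity rate.
hours, monthly_cost = monthly_capacity_cost(30, 500)
annual_cost = monthly_cost * 12

print(f"{hours:.0f} hours/month -> ${monthly_cost:,.0f}/month -> ${annual_cost:,.0f}/year")
# prints: 120 hours/month -> $60,000/month -> $720,000/year
```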

Her real problem wasn’t failed delegation. It was a failed handoff—she transferred responsibility but not the knowledge that keeps quality high.

We rebuilt her approach around one principle that changed everything—quality doesn’t drop when you delegate, it drops when you fail to define what quality means.

The Delegation Pattern That Destroys Quality In $50K–$70K Teams

This is the pattern across 67 businesses I’ve audited at $50K–$70K/month where founders delegate execution without documenting excellence criteria.

In these businesses, founders assume their team will “figure it out” or “ask if unsure” instead of spelling out standards.

Their teams don’t ask clarifying questions because they don’t know what they don’t know about the founder’s expectations.

Team members deliver work they believe is good based on their own internal standard of quality.

That work doesn’t meet the founder’s standard, so the founder takes the work back and stops delegating.

[Before]

"I know it when I see it" → [Designer Guesses] → [3 Rounds of Revisions] → 20–25 hrs review/month

[After]

[18-Point Design Checklist] → [Designer Hits Clear Target] → [1 Round of Revisions] → 6–8 hrs review/month

Pattern 1: Implicit Quality Standards Trapped In The Founder’s Head

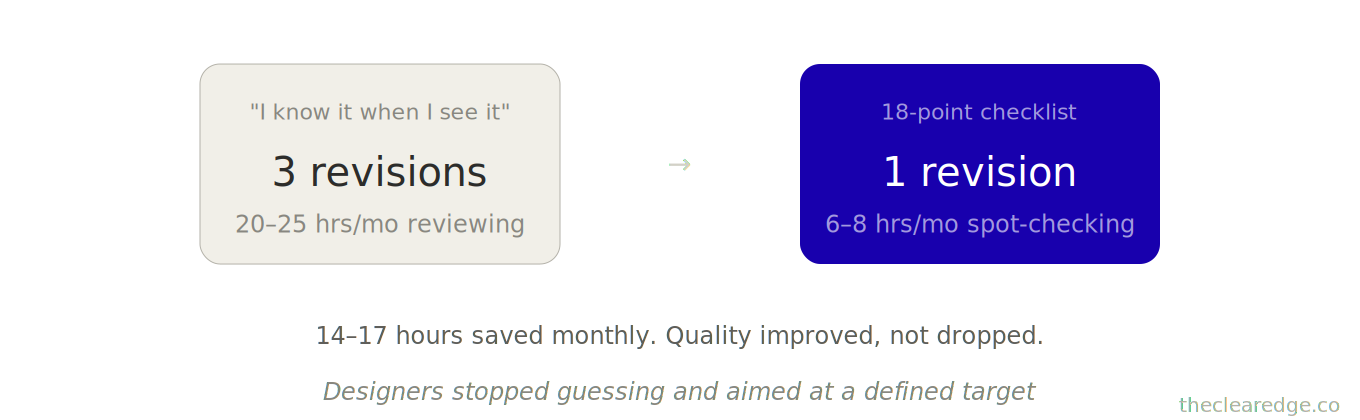

One agency owner at $68,000/month had 5 designers creating client work. She personally reviewed every design before client delivery—40–50 designs per month at 30 minutes each, adding up to 20–25 hours of review time every month.

I asked: “What makes a design ready for client delivery?”

She thought for 10 seconds. “It just... looks right. I know it when I see it.”

That’s the problem. “I know it when I see it” isn’t transferable.

Her designers created work, submitted it for review, and got vague feedback like “this doesn’t feel right” or “can you tighten this up?”

Result:

3 rounds of revisions on the average design

Designers spent 30–40% of their time on preventable revisions

All because they didn’t know her standards upfront

I had her document what “ready for client” actually meant. She listed 18 specific criteria:

Brand colours match client guidelines (no eyeballing—use hex codes)

Typography hierarchy: max 3 font weights

White space: minimum 15% of canvas

Mobile responsiveness tested on 3 devices

Client logo placement: never bottom-right (founder preference learned from 200+ projects)

File naming convention: [ClientName_AssetType_Version_Date]

And 12 more

Once documented and shared with designers:

Revisions dropped from 3 → 1 per design

Her review time dropped from 20–25 → 6–8 hours monthly (spot-checking 20% of designs)

14–17 hours saved monthly → $7,000–8,500 in recaptured capacity

Quality didn’t drop; it improved because designers stopped guessing and started aiming at a clearly defined target.
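The design-review numbers are simple arithmetic. The Python sketch below lets you swap in your own volumes. Variable names are illustrative. The sketch pairs low end with low end and high end with high end, which is how the 14–17 hour range comes out.

```python
REVIEW_MINUTES_PER_DESIGN = 30
HOURLY_RATE = 500  # founder capacity rate used throughout the article

# Before the checklist: every design reviewed in full, 40-50 designs per month.
before_hours = (40 * REVIEW_MINUTES_PER_DESIGN / 60, 50 * REVIEW_MINUTES_PER_DESIGN / 60)

# After the checklist: 6-8 hours of spot-checking per month, as reported above.
after_hours = (6, 8)

# Low end paired with low end, high end with high end.
hours_saved = (before_hours[0] - after_hours[0], before_hours[1] - after_hours[1])
dollars_saved = (hours_saved[0] * HOURLY_RATE, hours_saved[1] * HOURLY_RATE)

print(before_hours)   # (20.0, 25.0)
print(hours_saved)    # (14.0, 17.0)
print(dollars_saved)  # (7000.0, 8500.0)
```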

Pattern 2: No Verification System So Founders Micromanage Everything

A $71,000/month course creator delegated video editing to a contractor. For the first 3 videos, she watched every second before publishing, spending 2 hours to review each 20-minute video.

“I need to check quality,” she said.

But her “quality check” was an exhaustive review, not targeted verification. She was checking everything because she hadn’t defined what mattered most.

I asked: “What are the 3 things that would make you reject a video?”

She listed:

Audio sync issues

Branding elements missing (intro/outro)

Content cuts that change meaning

Those 3 things could be verified in 8–10 minutes, not 2 hours. We built a verification protocol:

Spot-check 3 random timestamps for audio sync

Verify branding at 0:00 (intro) and at the end (outro)

Review transition points between topics (where cuts are most likely to cause issues)

Her review time dropped from 2 hours → 12 minutes per video.

Quality stayed at 96% (measured by student feedback scores).

14 videos monthly → 26 hours saved → $13,000 in recaptured capacity.

The pattern: Founders confuse “checking everything” with “ensuring quality.” You don’t need to review every detail—you need to verify the details that matter most.

Knowing The Framework Isn’t Running It

You now understand the Clear Edge OS Quality Transfer on paper; the toolkit is what sequences, tests, and enforces it in your week. Upgrade to premium if you’re ready to run it.

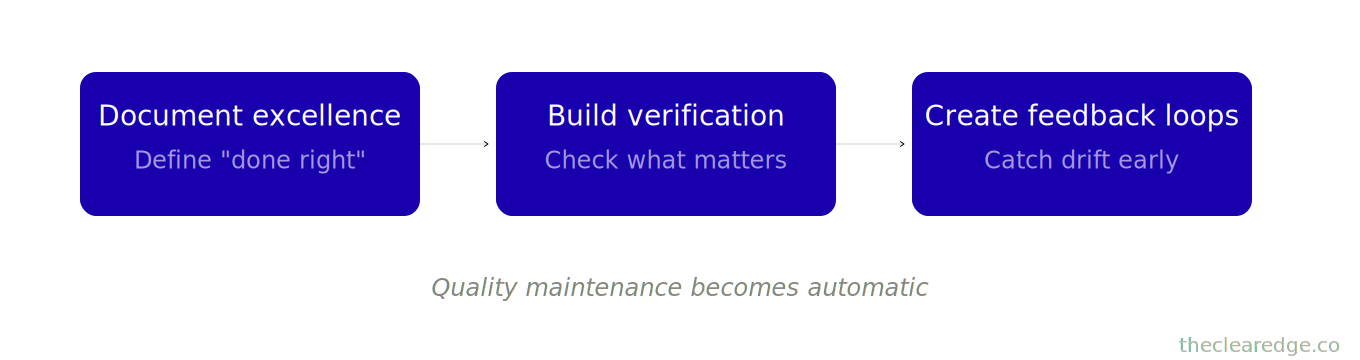

The Clear Edge OS Quality Transfer Framework For Delegating 15+ Hours Safely

Here’s the system that lets you delegate without standards dropping.

Most founders think quality control means reviewing work, but real quality control is building standards into the handoff so the work rarely needs review at all.

The Quality Transfer Framework:

Move 1: Document Excellence — Define what “done right” looks like with specificity

Move 2: Build Verification Systems — Check what matters without checking everything

Move 3: Create Feedback Loops — Improve standards when quality drifts

Each move removes one failure mode.

Together, they make quality maintenance automatic.

Move 1: Document Excellence To Define Transferable Quality Standards

Most quality drops happen because “good work” isn’t defined clearly enough to replicate.

A $59,000/month consultant had a senior associate handling client reports. Reports were fine, not great.

Clients didn’t complain, but the renewal rate dropped from 89% to 81% over 6 months.

I compared her reports to the associate’s. Both covered the required topics and included the necessary data, but hers had something his didn’t: anticipatory insight, where she predicted client questions and answered them before they were asked.

“How do you know what questions to anticipate?” I asked.

“Experience. After 200 clients, you see patterns,” she said.

“Can you teach the patterns?” I asked.

“I... haven’t tried,” she admitted.

We spent 3 hours extracting her pattern recognition into teachable criteria. She listed 8 types of anticipatory questions she always made sure to address.

“What does this mean for next quarter?” (forward implication)

“Why did this metric move?” (causation, not just correlation)

“What should we do differently?” (actionable recommendation)

“What’s the risk if we don’t act?” (consequence framing)

“How does this compare to industry?” (context benchmark)

“What’s the priority order?” (sequenced action)

“Who needs to be involved?” (stakeholder identification)

“What’s the timeline?” (implementation schedule)

She turned this into a report-quality checklist. The associate used the checklist on the next 3 reports, and client feedback improved immediately.

One client said, “These reports feel more strategic than before.”

Renewal rate: 81% → 87% over the next 90 days.

The mechanism is simple: excellence isn’t magic, it’s pattern application. When you document the patterns that separate good from great, your team can replicate excellence without guessing.
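One way to make a checklist like this hard to skip is to keep it as data. The author then marks which questions a draft answers before it goes out. The Python sketch below is a hypothetical illustration. The keys, the `missing_questions` helper, and the idea of self-marking coverage are assumptions; the consultant did not describe them.

```python
# The 8 anticipatory questions from the example, keyed by the label in parentheses.
REPORT_CHECKLIST = {
    "forward_implication": "What does this mean for next quarter?",
    "causation": "Why did this metric move?",
    "actionable_recommendation": "What should we do differently?",
    "consequence_framing": "What's the risk if we don't act?",
    "context_benchmark": "How does this compare to industry?",
    "sequenced_action": "What's the priority order?",
    "stakeholder_identification": "Who needs to be involved?",
    "implementation_schedule": "What's the timeline?",
}


def missing_questions(addressed: set[str]) -> list[str]:
    """Return the checklist questions a draft has not answered yet, in checklist order."""
    unknown = addressed - set(REPORT_CHECKLIST)
    if unknown:
        raise ValueError(f"Unknown checklist keys: {sorted(unknown)}")
    return [question for key, question in REPORT_CHECKLIST.items() if key not in addressed]


draft = {"forward_implication", "causation", "actionable_recommendation"}
for question in missing_questions(draft):
    print("Still to answer:", question)
```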

What to Document So Quality Survives the Handoff

Technical standards:

Specifications (dimensions, formats, parameters)

Accuracy requirements (margin of error, precision level)

Completion criteria (what “done” looks like)

Tool/platform requirements (software versions, settings)

Judgment standards:

Decision frameworks (when to choose A vs. B)

Priority hierarchies (what matters most when trade-offs are required)

Escalation triggers (when to ask vs. decide)

Quality thresholds (acceptable vs. excellent)

Client-facing standards:

Communication tone (formal vs. casual, by context)

Response time expectations (by urgency level)

Presentation format (structure, visuals, length)

Brand voice consistency (word choices, style)

Edge case protocols:

Unusual situations and how to handle them

Exception approvals (who decides what)

Failure recovery (what to do when things go wrong)

Client-specific preferences (documented quirks)

Implementation:

A $66,000/month agency owner spent 6 hours documenting quality standards for client onboarding.

Created:

22-point onboarding checklist

4 communication templates (kickoff, week 1 check-in, week 2 milestone, week 4 review)

6 escalation scenarios with handling protocols

3 client type profiles (corporate, startup, solo founder) with tone guidance

She handed the package to the account manager in a 2-hour training session. The account manager then ran the next 8 onboarding sessions independently.

Client satisfaction on onboarding:

94% before delegation

96% after delegation, driven by more consistent experience and faster response times

Time saved: 12–16 hours monthly → $6,000–8,000 in recaptured capacity.

Investment:

8 hours (documentation + training)

Payback: 1 month

Ongoing return: 12–16 hours monthly for as long as the process runs

The pattern: Documentation feels like overhead until you calculate the opportunity cost of not doing it.
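Here is that opportunity-cost calculation as a short Python sketch. It uses the agency example’s 8 setup hours (6 documenting, 2 training) and 12–16 hours saved per month. The helper name is hypothetical.

```python
HOURLY_RATE = 500  # founder capacity rate used throughout the article


def payback_months(setup_hours: float, hours_saved_per_month: float) -> float:
    """Months until the hours saved equal the hours invested up front."""
    return setup_hours / hours_saved_per_month


setup_hours = 6 + 2  # documentation + training session
for saved in (12, 16):
    print(f"{saved} h/month saved: payback in {payback_months(setup_hours, saved):.2f} months, "
          f"${saved * HOURLY_RATE:,} of capacity recaptured monthly")
# 12 h/month saved: payback in 0.67 months, $6,000 of capacity recaptured monthly
# 16 h/month saved: payback in 0.50 months, $8,000 of capacity recaptured monthly
```

Both ends of the range pay back inside the first month, which is why the payback above rounds to 1 month.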

You’ve seen what happens once standards are finally explicit; now we’ll decide where you still need your eyes and where a smarter verification system is enough.

Move 2: Build Verification Systems To Protect Quality Without Micromanaging

Once standards are documented, you need verification that catches issues without becoming micromanagement.

A $73,000/month consultant delegated proposal creation to an associate. For the first 4 proposals, she reviewed each section before sending, spending 90 minutes per proposal.

“I need to make sure everything’s right,” she said.

But “everything” included sections that rarely had issues:

Company background: copied from template, 2% error rate

Service description: standardized, 3% error rate

Timeline: formula-based, 1% error rate

Pricing: required her approval anyway, 0% error rate

Custom recommendations: unique per client, 31% error rate

Case study selection: judgment call, 24% error rate

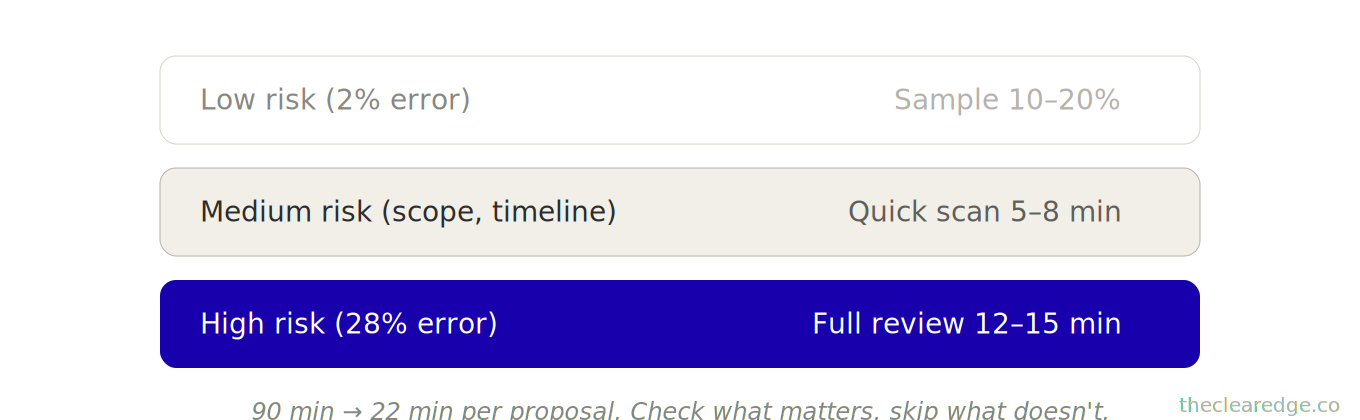

She was spending 25 minutes on low-risk sections with a 2% average error and only 20 minutes on high-risk sections with a 28% average error. Wrong allocation.

The fix: Risk-based verification

We built a 3-tier review system:

Tier 1 (Low-risk): Spot-check 10% randomly

Company background

Service descriptions

Standard sections

If an error is found, review the category fully and retrain

Tier 2 (Medium-risk): Review 100%, but quickly

Timeline

Project scope

Deliverables list

Check for logic errors, not style. 5–8 minutes per proposal

Tier 3 (High-risk): Review 100% carefully

Custom recommendations

Case study fit

Client-specific content

Her expertise adds the most value in this review. 12–15 minutes per proposal

Result:

Review time: 90 minutes → 22 minutes per proposal

4 proposals monthly → 272 minutes saved → 4.5 hours monthly

Quality maintained at 98% (client acceptance rate)

The mechanism: Not all work carries equal risk.

High-risk areas need full verification

Low-risk areas need sampling

Medium-risk areas need quick scans
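The three tiers translate into a per-proposal review plan, sketched in Python below. Two assumptions to flag: the section keys are illustrative, and the Tier 1 “spot-check 10% randomly” rule is modeled as an independent 10% chance per low-risk section. The time-saved lines reuse the article’s own figures.

```python
import random

# Section -> risk tier, following the 3-tier assignment above.
SECTION_TIERS = {
    "company_background": 1,
    "service_description": 1,
    "timeline": 2,
    "project_scope": 2,
    "deliverables_list": 2,
    "custom_recommendations": 3,
    "case_study_fit": 3,
    "client_specific_content": 3,
}
TIER_1_SAMPLE_RATE = 0.10


def review_plan(sections: list[str], rng: random.Random) -> dict[str, str]:
    """Decide how the founder handles each section of one proposal."""
    plan = {}
    for section in sections:
        tier = SECTION_TIERS[section]
        if tier == 3:
            plan[section] = "careful review"      # high risk: 100%, full attention
        elif tier == 2:
            plan[section] = "quick logic scan"    # medium risk: 100%, logic not style
        elif rng.random() < TIER_1_SAMPLE_RATE:
            plan[section] = "spot-check"          # low risk: sampled at random
        else:
            plan[section] = "skip"
    return plan


plan = review_plan(list(SECTION_TIERS), random.Random(7))

# Time saved with the article's figures: 90 -> 22 minutes, 4 proposals per month.
minutes_saved = (90 - 22) * 4
print(minutes_saved, round(minutes_saved / 60, 1))  # 272 4.5
```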

Verification System Types for Delegated Work

Random sampling: Use for high-volume, low-variation work.

Customer support responses

Social media posts

Data entry

Random sampling rules:

Check 10–20% of items randomly.

If the error rate is above the threshold (typically 5%), increase sampling or add training.

Increase sample size when a new person starts, then decrease it as their competence is proven.
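The sampling rules can be sketched in Python as two small functions. One draws the random sample; the other adjusts the rate after each batch. Only the 10–20% band and the roughly 5% error threshold come from the rules above. The rounding, the 5-point step, and the 10% floor are illustrative assumptions.

```python
import random


def pick_sample(items: list, rate: float, rng: random.Random) -> list:
    """Randomly choose about `rate` of the items to check (always at least one)."""
    k = min(len(items), max(1, round(rate * len(items))))
    return rng.sample(items, k)


def next_sample_rate(errors: int, checked: int, rate: float,
                     threshold: float = 0.05, step: float = 0.05,
                     floor: float = 0.10, ceiling: float = 1.0) -> float:
    """Sample more when the error rate is above threshold; ease back when it is not."""
    error_rate = errors / checked if checked else 0.0
    if error_rate > threshold:
        return min(ceiling, rate + step)
    return max(floor, rate - step)


# A new team member starts at the top of the 10-20% band.
rng = random.Random(42)
posts = [f"post-{i}" for i in range(50)]
to_check = pick_sample(posts, 0.20, rng)
print(len(to_check))  # 10

# 2 errors in 10 checks is a 20% error rate, above 5%, so sampling rises.
print(round(next_sample_rate(2, 10, 0.20), 2))  # 0.25
# A clean batch lets the rate drift back down toward the 10% floor.
print(round(next_sample_rate(0, 10, 0.20), 2))  # 0.15
```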

Checkpoint verification: Use for multi-step processes where early errors cascade.

Design work

Content creation

Technical projects

Checkpoint verification rules:

Review work at 2–3 key checkpoints before completion.

Catch issues early, while they are still cheap to fix.

If every checkpoint passes, the final output rarely needs review.

Outcome verification: Use for work where process doesn’t matter, only results.

Lead generation

Sales

Marketing campaigns

Outcome verification rules:

Don’t review how they did the work, verify the end metrics.

Focus on conversion rates, quality scores, and client satisfaction.

Intervene only when outcomes drift from the target.
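Outcome verification boils down to a threshold check on a few metrics. A Python sketch follows. The metric names, targets, and tolerances are hypothetical placeholders for your own numbers.

```python
# metric -> (target, allowed shortfall before you step in). Illustrative values only.
TARGETS = {
    "conversion_rate": (0.04, 0.005),
    "lead_quality_score": (7.5, 0.5),
    "client_satisfaction": (0.92, 0.02),
}


def metrics_needing_attention(actuals: dict[str, float]) -> list[str]:
    """Return the metrics that fell further below target than their tolerance allows."""
    flagged = []
    for metric, (target, tolerance) in TARGETS.items():
        value = actuals.get(metric)
        if value is not None and value < target - tolerance:
            flagged.append(metric)
    return flagged


this_month = {"conversion_rate": 0.041, "lead_quality_score": 6.8, "client_satisfaction": 0.93}
print(metrics_needing_attention(this_month))  # ['lead_quality_score']
```

Anything not flagged stays untouched. You look at how the work was done only after an outcome drifts.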

Peer verification: Use when you have multiple team members at a similar level.

Developers

Designers

Writers

Peer verification rules:

Team members review each other’s work before it reaches you.

Catches 80% of issues; you catch the final 20%.

Builds team judgment, reduces your load.

Implementation example:

The $71,000/month course creator (mentioned earlier) had 2 contractors editing videos and built a peer review system:

Contractor A edits the video.

Contractor B verifies the video against the checklist, then they publish it.

She spot-checks 15% randomly.

If a quality issue is found, both contractors review the example and update their understanding.

Result:

Her review time: 26 hours monthly → 4 hours monthly.

Video quality: 96% maintained.

Contractors developed better judgment (could catch each other’s mistakes).

22 hours monthly saved → $11,000 in capacity.

The pattern: Verification systems scale quality without scaling your time. You check less but catch more because you’re checking what matters.
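The two-pass peer workflow can be sketched in Python. Two assumptions to flag: the checklist reuses the three rejection criteria from the earlier video example (the article doesn’t list the contractors’ full checklist), and the function names are hypothetical.

```python
import random

# Assumed checklist: the three rejection criteria from the earlier video example.
CHECKLIST = ("audio_sync", "intro_outro_branding", "cuts_preserve_meaning")
FOUNDER_SPOT_CHECK_RATE = 0.15


def peer_check(results: dict[str, bool]) -> list[str]:
    """Contractor B's pass: return the checklist items the edited video fails."""
    return [item for item in CHECKLIST if not results.get(item, False)]


def founder_sample(published: list[str], rng: random.Random) -> list[str]:
    """The founder's pass: a random ~15% of published videos (at least one)."""
    k = min(len(published), max(1, round(FOUNDER_SPOT_CHECK_RATE * len(published))))
    return rng.sample(published, k)


# Contractor B blocks publication until every item passes.
failures = peer_check({"audio_sync": True, "intro_outro_branding": False,
                       "cuts_preserve_meaning": True})
print(failures)  # ['intro_outro_branding']

month = [f"video-{i}" for i in range(1, 15)]  # 14 videos per month
print(len(founder_sample(month, random.Random(3))))  # 2

hours_saved = 26 - 4  # her monthly review hours before and after, per the results above
print(hours_saved, hours_saved * 500)  # 22 11000
```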

You now have risk-based verification that protects quality in the moment; the next move is building feedback loops that keep those standards from decaying over 3–6 months.

Move 3: Create Feedback Loops To Stop Quality Drift Over Time

Even with documentation and verification, quality will drift over time. Teams get comfortable, shortcuts emerge, standards blur.

A $69,000/month agency delegated client delivery to 3 account managers.

First 60 days: quality held at 93% client satisfaction.

By day 120: dropped to 86%.

By day 180: 82%.

The drops weren’t dramatic, but they were steady. Clients were staying, yet renewals were getting harder to secure.

I audited 20 recent deliverables against the original quality standards and found:

15% missing elements from the original checklist

28% cut corners on documentation (less detail than required)

41% delivered on time but rushed (quality degraded in the final 20% of work)

The team hadn’t intentionally lowered standards. They had optimized for speed without noticing the quality trade-offs, and over 6 months their idea of “good enough” had quietly shifted downward.

The fix: Calibration sessions

Monthly 60-minute meetings where they:

Reviewed 3 recent deliverables (1 excellent, 1 acceptable, 1 below standard)

Discussed what put each one at its level

Updated the quality checklist based on new issues discovered

Committed as a team to one quality-improvement focus for the next month

Result:

Client satisfaction: 82% → 91% over 90 days

Quality drift caught early before clients churned

Team alignment on standards (everyone understood “excellent” the same way)

The mechanism:

Quality is a moving target.

Client expectations evolve.

Team interpretation shifts.

Feedback loops recalibrate before drift becomes a crisis.

Feedback Loop Types for Delegated Work

Spot audit + debrief: Monthly, review 5–10 completed deliverables against standards and discuss gaps with the team, updating standards if needed.

Time: 60–90 minutes monthly

Catches drift early

Client feedback review: Quarterly, analyze client satisfaction data for patterns; if a specific quality dimension is declining, investigate the root cause.

Time: 45 minutes quarterly

Identifies blind spots

Team quality retrospective: After major projects, the team discusses: What went well? What would we do differently? What should we add to standards?

Time: 30 minutes per major project

Captures lessons learned

Exception analysis: When quality issues arise, document: What happened? Why? How do we prevent repeats? Update standards and training.

Time: 20 minutes per exception

Turns failures into improvements
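A spot audit stays lightweight if each deliverable records which checklist items it completed. The Python sketch below samples recent work and computes a miss rate per checklist item. It then surfaces the worst items for the next calibration session. The data shape, the 5-item default sample, the 20% flag threshold, and the example checklist items are all assumptions.

```python
import random


def spot_audit(deliverables: list[dict], checklist: list[str],
               rng: random.Random, sample_size: int = 5) -> dict[str, float]:
    """Sample deliverables (expects at least one) and return, per checklist item,
    the share of the sample that missed it."""
    sample = rng.sample(deliverables, min(sample_size, len(deliverables)))
    return {
        item: sum(item not in d["completed"] for d in sample) / len(sample)
        for item in checklist
    }


def calibration_agenda(miss_rates: dict[str, float], threshold: float = 0.20) -> list[str]:
    """Items missed more often than the threshold go on next month's calibration agenda."""
    return [item for item, rate in miss_rates.items() if rate > threshold]


checklist = ["all_sections_present", "documentation_detail", "final_qa_pass"]
# Ten recent deliverables that all skipped the detailed documentation step.
recent = [{"id": i, "completed": {"all_sections_present", "final_qa_pass"}} for i in range(10)]

rates = spot_audit(recent, checklist, random.Random(0))
print(rates)  # {'all_sections_present': 0.0, 'documentation_detail': 1.0, 'final_qa_pass': 0.0}
print(calibration_agenda(rates))  # ['documentation_detail']
```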

Implementation:

The $59,000/month consultant from Move 1 set up monthly calibration sessions with her associate:

She selected 2 reports she’d written and 2 reports the associate had written.

Together, she and the associate walked through what made each report strong or weak.

The associate practiced giving feedback on her reports to build his judgment.

They updated the report quality checklist with the new criteria they’d identified.

Result after 6 months:

Associate’s reports now rated 94% by clients (her reports: 96%)

The gap closed from 11 percentage points → 2 percentage points

She could delegate 90% of reports confidently

18 hours monthly saved → $9,000 capacity reclaimed

The pattern: Feedback loops prevent quality from decaying because they regularly recalibrate what “excellent” means and keep standards aligned with reality over time.

[Deliverables Go Out] → [Spot Audits + Client Data] → [Find Drift or Gaps] → [Update Standards + Checklists] → [Team Calibration Session] → [Next Cycle at Higher Standard]

You’ve got standards, verification, and feedback loops in place; the last constraint is internal: perfectionism that quietly overrides your own acceptable 90–94% target.

Perfectionism Masquerading as Quality Standards at $50K–$70K/Month

The biggest barrier to delegation isn’t quality standards—it’s founders who confuse perfectionism with excellence.

A $67,000/month founder told me she couldn’t delegate because “no one will care as much as I do.” I asked to see the work she’d rejected recently.

She showed me a report her team member created. I compared it to her version.

Her version: 97% client satisfaction

Team version: 94% client satisfaction

Difference: 3 percentage points

Time investment:

Her version: 4.5 hours

Team version: 2.8 hours

The math: She spent 1.7 additional hours to raise satisfaction by 3 points. At her $500/hour rate, she paid $850 for a 3-point improvement.

The client wouldn’t pay more for those extra 3 points, and most clients wouldn’t even notice the difference.

“But I notice,” she said.

That’s perfectionism, not standards.

The test: If client satisfaction is 94% and you can get 97% by investing 60% more time, is that trade worth it?

Usually, no—unless you’re in a domain where 97% is a competitive requirement (rare at this revenue stage).
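You can run this test on any deliverable. Below is a Python sketch with a hypothetical helper, fed the numbers from this example.

```python
def polish_cost(founder_hours: float, team_hours: float,
                founder_score: float, team_score: float,
                hourly_rate: float = 500) -> dict[str, float]:
    """Price the extra founder time spent lifting a team deliverable's score."""
    extra_hours = founder_hours - team_hours
    extra_cost = extra_hours * hourly_rate
    points_gained = founder_score - team_score
    return {
        "extra_hours": extra_hours,
        "extra_cost": extra_cost,
        "cost_per_point": extra_cost / points_gained,
        "extra_time_pct": extra_hours / team_hours * 100,
    }


result = polish_cost(founder_hours=4.5, team_hours=2.8, founder_score=97, team_score=94)
for name, value in result.items():
    print(f"{name}: {value:,.1f}")
# extra_hours: 1.7
# extra_cost: 850.0
# cost_per_point: 283.3
# extra_time_pct: 60.7
```

The roughly 60% extra time is the figure the test above asks you to weigh.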

The fix: Define “acceptable” vs. “excellent” explicitly

We documented two quality levels:

Acceptable (90–94%): Team can deliver, requires spot-checking

Excellent (95%+): Requires her direct work or extensive team oversight

For 80% of deliverables, “acceptable” was the target. She invested her time in the 20% where “excellent” mattered (new client onboarding, high-stakes presentations, strategic recommendations).

Result:

She delegated 15 deliverables monthly that previously required her time

Quality averaged 92% (vs. her 97%, acceptable gap)

67 hours monthly saved → $33,500 in capacity

Invested the saved time in business development and landed 2 new clients, generating $11,400 in new monthly recurring revenue.

ROI:

Accepting a 5-point quality reduction (97% → 92%)

Freed time worth $33,500

That time generated $11,400 new MRR

$11,400 × 12 = $136,800 annualized

The perfectionism was costing her $100K+ that could’ve gone into growth, assets, or time off.
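Here is a quick Python check of the ROI figures. One inference to flag: reading the 67 hours as 15 deliverables at roughly the 4.5 founder hours from the earlier report example is an assumption. It happens to match, but the example doesn’t state it.

```python
HOURLY_RATE = 500

deliverables_per_month = 15
founder_hours_each = 4.5  # assumed, borrowed from the report example above
hours_freed = deliverables_per_month * founder_hours_each  # 67.5, which the article rounds to 67

capacity_value = 67 * HOURLY_RATE      # $33,500 per month, as stated
new_mrr = 11_400
annualized_new_revenue = new_mrr * 12  # $136,800
quality_gap_points = 97 - 92           # 5 percentage points

print(hours_freed, capacity_value, annualized_new_revenue, quality_gap_points)
# 67.5 33500 136800 5
```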

You’ve probably felt this too—knowing your team’s work is “good enough” but still feeling compelled to push it toward “perfect.” That isn’t standards enforcement; it’s control dressed up as concern for quality.

[Quality Level?]

→ [Acceptable 90–94%]: 80% of work · Team owns · Spot-check only

→ [Excellent 95%+]: 20% of work · Founder owns · Deep involvement

What Changes When You Install The Quality Transfer (And The Cost If You Don’t)

Implementation time:

Week 1–2: Document standards for 3–5 high-frequency deliverables (6–10 hours)

Week 3: Build verification systems (3–4 hours)

Week 4: Set up feedback loops (2–3 hours)

Total: 11–17 hours initial investment

Ongoing maintenance:

Monthly calibration: 60–90 minutes

Quarterly reviews: 45 minutes

Exception handling: 20 minutes per issue

Total: 3–4 hours monthly

What you get:

At $64,000/month with 30 delegable hours weekly:

Transfer 50% of delegable work (15 hours weekly)

15 hours × 4.33 weeks = 65 hours monthly

At $500/hour capacity → $32,500 monthly → $390,000 annually

Quality maintained at 92–96% (vs. founder’s 96–98%)

Client satisfaction impact: negligible (2–3 point difference rarely noticed)

Cost of not building the Quality Transfer framework

Staying in execution mode means:

65 hours monthly spent on work others could handle → 780 hours yearly

At $500/hour → $390,000 annual opportunity cost

Plus: burnout risk, inability to take time off, revenue ceiling at founder capacity

Over 3 years: $1.17 million in lost capacity because quality fears kept you from delegating.
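The projection above uses 4.33 weeks per month and rounds to whole hours before pricing them. Here are the same steps as a short Python sketch with illustrative names.

```python
WEEKS_PER_MONTH = 4.33  # average weeks in a month (52 / 12 is about 4.33)
HOURLY_RATE = 500

delegable_hours_per_week = 30
hours_transferred_weekly = delegable_hours_per_week * 0.5  # 15 hours

monthly_hours = round(hours_transferred_weekly * WEEKS_PER_MONTH)  # 64.95 rounds to 65
monthly_value = monthly_hours * HOURLY_RATE  # $32,500
yearly_hours = monthly_hours * 12            # 780 hours
yearly_value = monthly_value * 12            # $390,000
three_year_value = yearly_value * 3          # $1,170,000

print(monthly_hours, monthly_value, yearly_hours, yearly_value, three_year_value)
# 65 32500 780 390000 1170000
```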

You’ve seen the full time, capacity, and $1.17 million cost curve on avoiding delegation; the next step is picking one concrete deliverable and running the Quality Transfer on it.

Delegation Fear Costs More Than Quality Drift

If you keep avoiding delegation after one bad handoff, you’re not protecting quality—you’re choosing to burn $390K–$1.17M in trapped capacity instead of fixing the handoff; start the system, not another 62-hour week.

Run The Quality Transfer Quick-Gate Checklist

Next time you’re about to keep a deliverable instead of delegating it, pull this and move through each gate.

☐ Listed the deliverable’s technical, judgment, client-facing, and edge-case standards using your existing Quality Transfer criteria for this specific work.

☐ Checked whether this deliverable fits your “acceptable 90–94%” band or truly requires “excellent 95%+” before you touch it yourself.

☐ Scored which Move you’re missing most here—Document Excellence, Verification System, or Feedback Loop—and wrote the single fix needed to delegate it.

☐ Compared the time you’d spend lifting quality from 92–94% to 96–98% against your $500/hour capacity and logged the real cost for this pass.

☐ Logged whether this review stayed inside your agreed 12–22 minute verification window instead of slipping back into full rework mode.

Five minutes now can free the next 65–120 undelegated hours a month, worth $32,500–$60,000, that would otherwise stay trapped on your plate.

Where to Go From Here: Install Quality Transfer And Unlock Delegated Capacity

If you are in the $55K–$75K/month band and still hand-checking everything, you’re donating five to six figures of capacity to avoid a 2–5 point quality gap. The drag isn’t your team’s talent—it’s the leak between your standards and their execution.

From here, run the sequence once:

Document excellence for 3–5 high-frequency deliverables so “done right” is concrete, repeatable, and ready to hand off without quality crashing.

Build risk-based verification and peer review so you check what matters, cut review time by dozens of hours, and keep quality in the 92–96% band.

Install monthly calibration and exception feedback loops so small drifts get corrected fast and renewal, satisfaction, and revenue stay locked in.

This is the shift where The Quality Transfer becomes your permanent fix for the delegation leak, not another one-off sprint.

Your Turn: Run the Quality Transfer on One Deliverable This Week

What’s the one deliverable you handle that you know someone else could do—if only you could transfer your standards?

Pick one. Document the quality criteria this week.

Fifteen minutes daily for 5 days is 75 minutes total. That’s your starting point.

Drop your answer below. I read every reply.

And if you can’t identify what makes your work “good,” just say “I need to extract my standards”—that awareness puts you ahead of most founders.

You’ve taken the first swing at extracting your standards; the next article steps up a level to show how this fits into a 30-hour week architecture.

Up Next: The 30-Hour Week For $50K–$70K Founders

Next article covers “The 30-Hour Week: Systems That Run Your $50K–$75K Business Without You.”

Most operators at $50K–$70K/month work 60+ hours because they haven’t built real independence from their own capacity.

I’ll show you the system architecture that lets you step back to 30 hours while maintaining (or growing) revenue—the specific systems to build, the team structure that supports it, and why most “passive” business models fail.

FAQ: Clear Edge OS Quality Transfer System For Delegation

Q: How do I know if I need the Quality Transfer instead of just “hiring better people”?

A: You need it when you’re at $50K–$70K/month, working 60+ hours weekly, have already tried delegating once or twice, and saw quality drop enough to put $10K+ in monthly recurring revenue at risk.

Q: How does the Quality Transfer let me delegate 15–30 hours weekly without lowering standards?

A: It installs three moves—Document Excellence, Build Verification Systems, and Create Feedback Loops—so you transfer 18+ explicit standards, verify only what matters, and recalibrate monthly, which safely shifts 15–30 hours off your plate while holding 92–96% client satisfaction.

Q: How do I use Document Excellence so my team stops guessing what “good enough” means?

A: You extract your implicit standards into precise technical, judgment, client-facing, and edge-case criteria—like the 18-point design checklist that cut revisions from 3 to 1 and founder review time from 20–25 to 6–8 hours per month.

Q: How does the Quality Transfer reduce the risk of another $13,700 MRR scare like the failed junior consultant handoff?

A: Instead of handing off generic tasks (“handle strategy reports”), you hand off reports plus a 12-point quality checklist, client preferences, and escalation rules, which turns “guessing” into predictable output and removes the failure mode that put $13,700/month at risk.

Q: How do I build verification systems that keep quality high without spending 2 hours reviewing every deliverable?

A: You classify sections by risk and use sampling, checkpoints, and outcome-based checks so high-risk areas get full review, low-risk areas get spot checks, and review time falls from 90 minutes to roughly 22 minutes per proposal while maintaining ~98% client acceptance.

Q: How do I use peer verification so contractors and team members catch most issues before I see the work?

A: You pair team members to review each other’s deliverables against the checklist and only spot-check 10–20% yourself, like the course creator who cut video review from 26 to 4 hours monthly while keeping quality at 96% and reclaiming 22 hours of capacity.

Q: How do feedback loops stop quality from drifting down over 3–6 months after I delegate?

A: You run monthly 60–90 minute calibration sessions, spot audits, and exception reviews so the team regularly compares “excellent, acceptable, and below standard” work, updates checklists, and reverses drifts like 93% → 82% client satisfaction back up to 91% in 90 days.

Q: How do I separate real standards from perfectionism so I stop “fixing” 94% work to 97% at huge cost?

A: You define acceptable (90–94%) vs excellent (95%+) explicitly, reserve your time for the 20% of deliverables that truly require 95%+, and let the team own the rest, which is how one founder freed 67 hours monthly ($33,500 capacity) and added $11,400 MRR while accepting a 3–5 point quality gap.

Q: How much time and capacity can I realistically free by installing the Quality Transfer?

A: With 30 delegable hours weekly, handing off just half (15 hours) yields about 65 hours monthly and $32,500 in capacity at a $500/hour rate, while full use of the framework across 3–5 deliverables typically unlocks 12–65 hours per month worth $6K–$32.5K.

Q: What does it actually cost if I avoid delegation for three years after one bad experience?

A: At 30 undelegated hours weekly and a $500/hour founder rate, you lose 120 hours and $60,000 in capacity each month: $720,000 per year and about $2.16 million over three years. Even the 15 hours weekly you could hand off first add up to about $1.17 million in three-year capacity that could have gone into growth, time off, or new assets.

Navigate The Clear Edge OS Systems for Scaling From $5K to $150K

Start here: The Complete Clear Edge OS — Your roadmap from $5K to $150K with a 60-second constraint diagnostic.

Use daily: The Clear Edge Daily OS — Daily checklists, actions, and habits for all 26 systems.

LAYER 1: SIGNAL (What to Optimize)

The Signal Grid • The Bottleneck Audit • The Five Numbers

LAYER 2: EXECUTION (How to Optimize)

The Momentum Formula • The One-Build System • The Revenue Multiplier • The Repeatable Sale • Delivery That Sells • The 3% Lever • The Offer Stack • The Next Ceiling

LAYER 3: CAPACITY (Who Optimizes)

The Delegation Map • The Quality Transfer • The 30-Hour Week • The Exit-Ready Business • The Designer Shift

LAYER 4: TIME (When to Optimize)

Focus That Pays • The Time Fence

LAYER 5: ENERGY (How to Sustain)

The Founder Fuel System • $100K Without Burnout

INTEGRATION & MASTERY

The Founder’s OS • The Quarterly Wealth Reset

AMPLIFICATION (AI & Automation)

The Automation Audit • The Automation Stack

⚑ Found a Mistake or Broken Flow?

Use this form to flag issues in articles (math, logic, clarity) or problems with the site (broken links, downloads, access). This helps me keep everything accurate and usable. Report a problem →


➜ Help Another Founder, Earn a Free Month

If this system helped you see how much capacity is tied up in avoidable delegation fears, share it with one founder who needs that clarity.

When you refer 2 people using your personal link, you’ll automatically get 1 free month of premium as a thank-you.

Get your personal referral link and see your progress here: Referrals

Get The Quality Transfer Toolkit

You’ve read the system. Now implement it.

Premium gives you:

Battle-tested PDF toolkit with every template, diagnostic, and formula pre-filled—zero setup, immediate use

Audio version so you can implement while listening

Unrestricted access to the complete library—every system, every update

What this prevents: Leaving 15–30 delegable hours weekly, and $390K–$1.17M in capacity over one to three years, trapped behind undocumented standards.

What this costs: $12/month. The full Quality Transfer toolkit ships with this article.

Download everything today. Implement this week. Cancel anytime, keep the downloads.

Already upgraded? Scroll down to download the PDF and listen to the audio.